Recently I have been dabbling in a lot of NLP problems and jargon. The more I read about it, the more intriguing and beautiful I find the way we humans try to transfer our knowledge of a language to machines.

However hard we try, our laid-back nature pushes us to reuse existing knowledge and existing material to make machines understand a given language.

But machines, as we know, can only understand digits or, to be more precise, binary (0s and 1s). When I first got my hands on NLP, this was my first question: how does a machine understand that something is a word, a sentence, or a character?

I am still a learner in this field (and in life 😝), but what I could understand is that the information we are going to use has to be converted into binary, or some kind of numerical representation, for a machine to understand it.

There are various ways to “encode” this information into numerical form, and these encodings are what we call word embeddings.

What are word embeddings?

Word embedding is the collective name for a set of language modeling and feature learning techniques in natural language processing (NLP) where words or phrases from the vocabulary are mapped to vectors of real numbers. Conceptually it involves a mathematical embedding from a space with many dimensions per word to a continuous vector space with a much lower dimension.

Wikipedia

In short, a word embedding is a way to convert textual information into numerical form so that we can analyse it.

That analysis can be measuring the similarity between words or sentences, understanding the context in which a phrase or word is used, etc.

How are they formed?

Let’s try to convert a given sentence into numerical form:

A quick brown fox jumps over the lazy dog

How do we convert the above sentence into a numerical form such that our machine, or even we, can perform operations on it? It’s hard to figure out the mathematics of language, but we can always try.

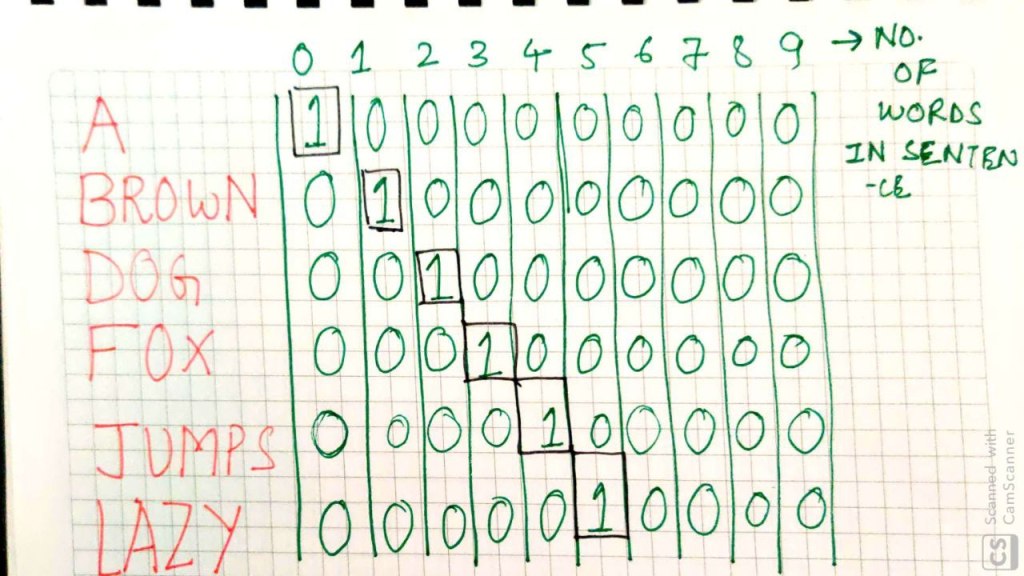

So let’s try. What we can do is collect all the unique words in the sentence, sort them, and make a list of them. But then how do we get a numerical representation for each word? It’s time for us to visit our long-lost friend: the matrix.

Let’s get the words in proper order, i.e. unique and sorted.
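Here is a minimal sketch of that step in Python. The lowercasing and the split on whitespace are my own assumptions about how to tokenize; the variable names are just for illustration.

```python
sentence = "A quick brown fox jumps over the lazy dog"

# Assumption: lowercase everything so "A" and "a" count as the same word,
# and split on whitespace as a very naive tokenizer.
words = sentence.lower().split()

# Keep each word once, then sort alphabetically.
vocabulary = sorted(set(words))

print(vocabulary)
# ['a', 'brown', 'dog', 'fox', 'jumps', 'lazy', 'over', 'quick', 'the']
```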

Now we will try to convert these words into numerical form using some matrix concepts (mostly representation) so that each word looks different from every other word.

As you can see, there are 9 words in total, so we took 9 blocks to represent them. In more mathematical terms, each representation is called a vector, and the dimension of this vector is 1 x 9. So each word in this universe can be represented by a vector of that dimension, and we can now carry out operations on it to get our desired result.
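The block representation can be sketched roughly like this: one slot per vocabulary word, with a 1 in the slot that belongs to the word and 0 everywhere else. The `one_hot` helper is a hypothetical name I made up for this example, and the lowercase whitespace tokenization is an assumption.

```python
sentence = "A quick brown fox jumps over the lazy dog"

# Assumed tokenization: lowercase, split on whitespace, unique and sorted.
vocabulary = sorted(set(sentence.lower().split()))


def one_hot(word, vocabulary):
    """Return a list with a single 1 at the word's position in the vocabulary."""
    vector = [0] * len(vocabulary)
    vector[vocabulary.index(word)] = 1
    return vector


# One row per word; together the rows form a square matrix.
for word in vocabulary:
    print(f"{word:>6} {one_hot(word, vocabulary)}")

print(one_hot("fox", vocabulary))
# [0, 0, 0, 1, 0, 0, 0, 0, 0]
```

Note that `vocabulary.index` raises a `ValueError` for a word that is not in the vocabulary, which is one of the practical limits of this scheme.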

A few prominent operations are measuring how similar two vectors are or how different they are. We can dive into that later.
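As a small preview, two common ways to compare vectors are cosine similarity (how similar) and Euclidean distance (how different). These are my choice of illustration, not something this post commits to, and the sample vectors below are made up.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between u and v: 1.0 means same direction, 0.0 means orthogonal."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def euclidean_distance(u, v):
    """Straight-line distance between u and v: 0.0 means identical vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


# Hypothetical example vectors.
a = [1, 0, 0]
b = [0, 1, 0]
c = [1, 1, 0]

print(cosine_similarity(a, a))   # same vector
print(cosine_similarity(a, b))   # nothing in common
print(cosine_similarity(a, c))   # partial overlap
print(euclidean_distance(a, b))
```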

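The method that we just followed is a very brute-force way of doing this, and it is officially called One-Hot Encoding. Count Vectorizing is a closely related technique: it uses the same kind of vocabulary but stores how many times each word occurs in a piece of text.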
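Count vectorizing is usually understood as working on a whole piece of text rather than a single word: each slot holds how many times that vocabulary word appears. A rough sketch, where the second sentence and the `count_vector` helper are hypothetical and the tokenization is again an assumption:

```python
from collections import Counter

# Vocabulary built from the example sentence (lowercased, unique, sorted).
vocabulary = sorted(set("A quick brown fox jumps over the lazy dog".lower().split()))


def count_vector(text, vocabulary):
    """Count how often each vocabulary word occurs in text; other words are ignored."""
    counts = Counter(text.lower().split())
    return [counts[word] for word in vocabulary]


# A made-up second sentence that reuses some words more than once.
print(count_vector("the lazy dog jumps over the quick dog", vocabulary))
# [0, 0, 2, 0, 1, 1, 1, 1, 2]
```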

Why do we do this?

The way we encoded the words above can be really useless on its own, because it is just a representation and carries no other information: we don’t know how two words are related, whether they are morphologically similar, etc.
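One way to see this limitation is to take the dot product of every pair of different words. With the simple encoding above, the 1s never line up, so every pair scores 0 and "quick" looks exactly as unrelated to "lazy" as it does to "dog". A small sketch, with helper names of my own and the same assumed tokenization:

```python
from itertools import combinations

sentence = "A quick brown fox jumps over the lazy dog"
vocabulary = sorted(set(sentence.lower().split()))


def one_hot(word):
    """Vector with a single 1 at the word's position in the vocabulary."""
    return [1 if entry == word else 0 for entry in vocabulary]


def dot(u, v):
    """Plain dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


# Score every pair of distinct words.
pair_scores = {
    (w1, w2): dot(one_hot(w1), one_hot(w2))
    for w1, w2 in combinations(vocabulary, 2)
}

print(set(pair_scores.values()))
# {0}
```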

The prime reason we want an encoding is to find similar words, gauge the context of topics, etc.

There are various other techniques that actually produce intelligent embeddings, ones that have an idea about what is going on.

As Hunter puts it

When constructing a word embedding space, typically the goal is to capture some sort of relationship in that space, be it meaning, morphology, context, or some other kind of relationship

and a lot of other embeddings, like ELMo, USE (Universal Sentence Encoder), etc., do a good job at that.

As we go ahead and explore more embeddings, you will see that they keep getting more complex: layers of trained models are introduced, and so on.

We even have sentence embeddings, which are quite different from plain word embeddings.

Conclusion

This was just the tip of the iceberg, or maybe not even that, but I thought it would be helpful for someone who is starting their exploration, because it took me some time to get my head around this concept. Thanks a lot for reading.

Happy Hacking!

References:

http://hunterheidenreich.com/blog/intro-to-word-embeddings/

https://towardsdatascience.com/document-embedding-techniques-fed3e7a6a25d?gi=7c5fcb5695df